okay, #3 here.

i record a brilliant lecturer, and post the lectures/dialogues online for free.

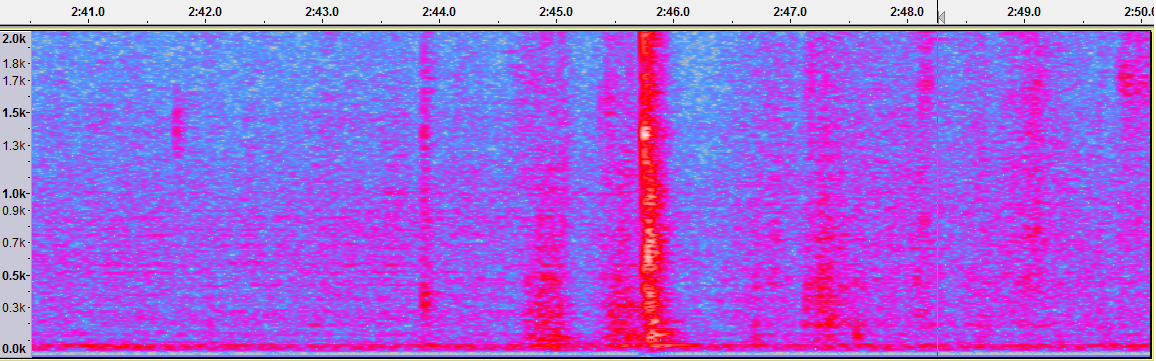

but unfortunately the recording surroundings are less than ideal (refrigerators, chimes, birds, airplanes, garbage trucks and street sweepers, a coffee pot, etc.)

i have already taken the obvious recording steps (better mics, especially dynamic ones, placed closer to the speaker, etc.)

but then, when editing the recording in audacity, i try to clean it up and boost the signal-to-noise ratio for listeners (who will often be listening in their cars, without headphones, or in other less-than-ideal environments such as coffee shops). for that i often use effects such as dynamic compression (the 3rd party one detailed at https://theaudacitytopodcast.com/chriss-dynamic-compressor-plugin-for-audacity/), noise reduction, low-cut / high-pass filters, and even simple amplification or attenuation.

however, as far as i can tell, these tools all work, in one way or another, on amplitude and frequency. i would like something that would (again, not in real-time) simply reduce to 0 amplitude (silence) every section in which no voice is detected. THEN, once that was done, any effects/filters i applied (like those listed above) would obviously work MUCH better, because all the intervening junk (between voice segments) would be gone. (of course, the junk is still there DURING the speech segments too, but that is a different issue, and i can deal with it).
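
to make the idea concrete, here is a rough sketch in python (not nyquist, and not anything audacity ships with). it cuts the audio into short frames, asks a detector whether each frame is voice, and zeroes every frame that is not. all the names here (`looks_like_voice`, `silence_non_voice`, `energy_floor`, `max_zcr`, `hangover`) are made up for illustration, and the built-in detector is only a crude energy + zero-crossing stand-in, so it is still amplitude/frequency based. a real voice detector would be passed in through the `detector` argument.

```python
def frame_energy(frame):
    """mean squared amplitude of one frame."""
    return sum(s * s for s in frame) / len(frame)


def zero_crossing_rate(frame):
    """fraction of adjacent sample pairs that change sign."""
    if len(frame) < 2:
        return 0.0
    crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a < 0) != (b < 0))
    return crossings / (len(frame) - 1)


def looks_like_voice(frame, energy_floor=1e-4, max_zcr=0.25):
    """hypothetical stand-in detector: loud enough and not too hissy.

    the thresholds are assumptions, not tuned values.
    """
    return (frame_energy(frame) > energy_floor
            and zero_crossing_rate(frame) < max_zcr)


def silence_non_voice(samples, frame_len=480, hangover=5,
                      detector=looks_like_voice):
    """return a copy of samples with every non-voice frame set to 0.0.

    samples  : list of floats in the range -1.0 .. 1.0
    frame_len: samples per frame (480 is 30 ms at 16 kHz)
    hangover : extra frames kept after voice stops, so word endings
               are not chopped off
    """
    out = [0.0] * len(samples)
    remaining = 0  # hangover frames still allowed through
    for start in range(0, len(samples), frame_len):
        frame = samples[start:start + frame_len]
        if detector(frame):
            remaining = hangover
            keep = True
        elif remaining > 0:
            remaining -= 1
            keep = True
        else:
            keep = False
        if keep:
            out[start:start + len(frame)] = frame
    return out
```

the hard cut to zero at frame edges can click, so a real version would probably fade in and out over a few milliseconds instead.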

i had thought of programming something myself in nyquist (i do love the elegance of lisps, and emacs can color the matching parens), but i am super busy with tons of other projects. if i could find the time, it does sound like a great learning experience and a wonderful way to learn about audio. but then again, if someone has already programmed a Nyquist VAD (voice activity detector), i wouldn't complain.

please let me know if anyone is still working on one.