Hello,

I work for a wilderness school, and we are making YouTube videos and video accompaniments to our books. Most of what we shoot is people sitting in a circle and talking, or standing around putting on a workshop and answering questions about it.

We already have a lot of footage stored, and much of it is pretty rough to work with. There are mosquitoes (some flying right past the mic - LOUD!), birds, a crackling fire, and who knows what else mucking up the dialogue. I thought I could get away without learning how to manipulate frequencies and do noise reduction by synchronizing the audio from our external voice recorder with the video, but, alas, in some instances that does not make a difference.

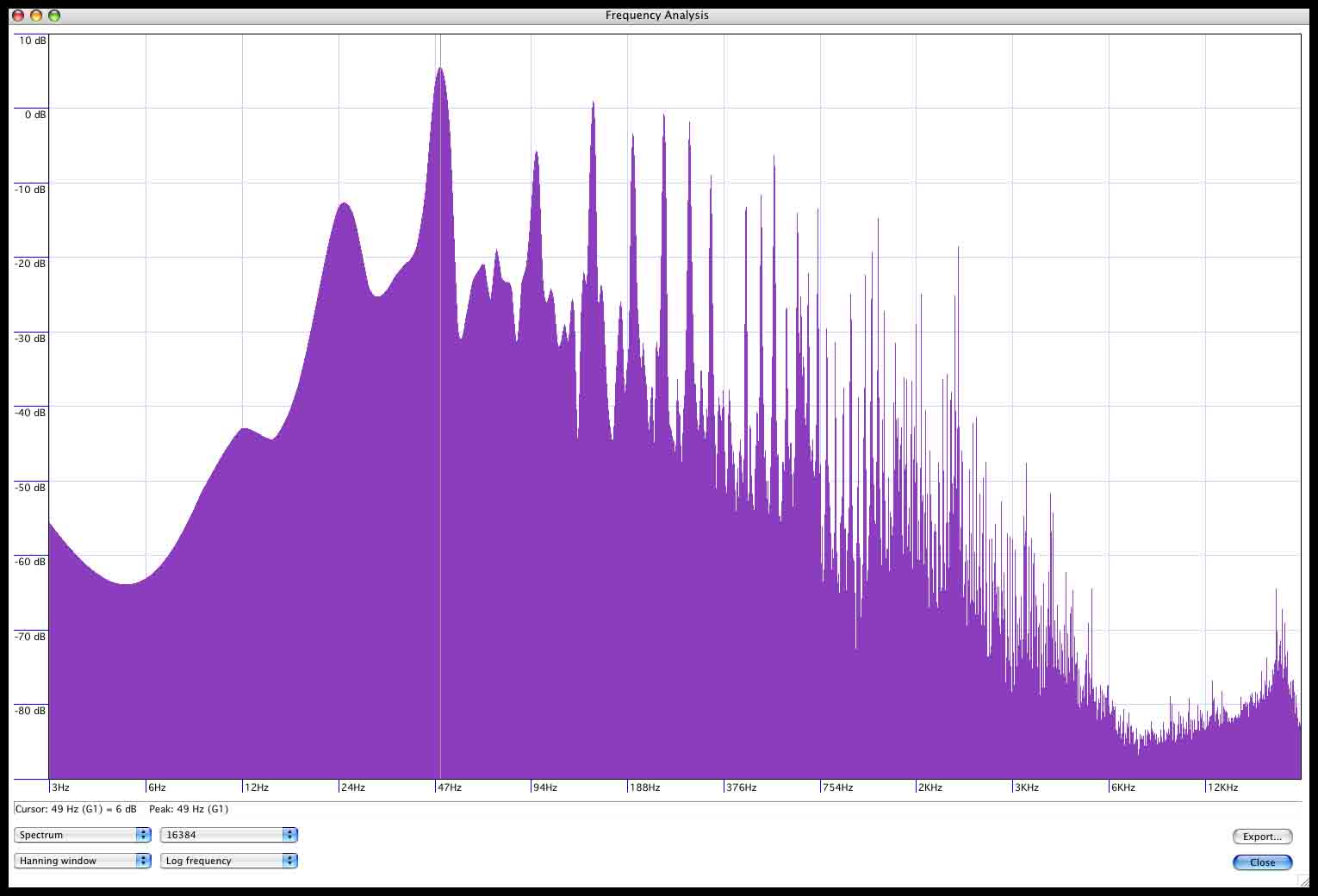

So far I’ve been looking for a program with a good real-time spectrum meter so I can learn which frequencies the different noises sit at (e.g. insects versus birds versus a plane overhead versus different human voices). WaveLab and Audacity both seem to have decent meters. I should add that I work at a small non-profit, and investing time and/or money in the big Adobe products (like Audition) seems like overkill.
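For reference, here is a minimal sketch of what a spectrum meter computes for one short window of audio, assuming Python and a mono clip already loaded as a list of float samples. The function names are hypothetical, and it uses a naive discrete Fourier transform for readability; real meters use an FFT, which gives the same numbers much faster.

```python
import cmath
import math


def magnitude_spectrum(samples, sample_rate):
    """Return (frequency_hz, magnitude) pairs for one window of samples.

    Naive O(N^2) DFT: fine for a few hundred samples, too slow for long clips.
    Bin k sits at k * sample_rate / N Hz, so 400 samples at 8000 Hz gives 20 Hz bins.
    """
    n = len(samples)
    spectrum = []
    for k in range(n // 2 + 1):  # bins from 0 Hz up to the Nyquist frequency
        acc = sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        spectrum.append((k * sample_rate / n, abs(acc)))
    return spectrum


def dominant_frequency(samples, sample_rate):
    """Frequency (Hz) of the loudest bin, skipping the 0 Hz (DC) bin."""
    bins = magnitude_spectrum(samples, sample_rate)[1:]
    freq, _ = max(bins, key=lambda pair: pair[1])
    return freq


if __name__ == "__main__":
    sr = 8000
    # Made-up test clip: a quiet 200 Hz tone plus a louder 3000 Hz tone.
    clip = [
        0.3 * math.sin(2 * math.pi * 200 * t / sr)
        + 0.8 * math.sin(2 * math.pi * 3000 * t / sr)
        for t in range(400)
    ]
    print(dominant_frequency(clip, sr))  # prints 3000.0
```

A meter repeats this over short, successive windows, which is why a steady hum shows up as a peak that stays put while a bird call or a passing mosquito moves around on the display.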

My big question is: is it really feasible for an amateur like me to reduce or eliminate nature sounds? My impression so far is that most automated noise removers just learn a fixed set of frequencies (like electrical hum or noise from the camera hardware) and remove that. But what about sounds whose frequencies vary, like insects or birdsong? Will there be too many artifacts to keep track of? Or should I just amplify the hell out of it in post and then try to get rid of the resulting amplified hardware noise?
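To illustrate the "learn a frequency set and remove it" idea, here is a minimal sketch of spectral subtraction, one classic profile-based technique (not necessarily what any particular program does). It assumes Python, mono float samples, and a stretch of audio that contains only background noise; the function names and the `over_subtract` parameter are hypothetical. It only works on magnitudes; a full denoiser would keep each bin's phase and resynthesize audio with an inverse transform over overlapping windows.

```python
import cmath
import math


def dft_magnitudes(frame):
    """Magnitude of each DFT bin from 0 Hz to Nyquist (naive O(N^2) DFT)."""
    n = len(frame)
    return [
        abs(sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
        for k in range(n // 2 + 1)
    ]


def noise_profile(noise_frames):
    """Average magnitude per bin over frames containing only background noise."""
    spectra = [dft_magnitudes(f) for f in noise_frames]
    return [sum(col) / len(col) for col in zip(*spectra)]


def spectral_subtract(frame, profile, over_subtract=1.0):
    """Subtract the (scaled) noise profile from one frame, clamping each bin at 0."""
    return [max(m - over_subtract * p, 0.0) for m, p in zip(dft_magnitudes(frame), profile)]


if __name__ == "__main__":
    sr, n = 8000, 400  # 20 Hz bins

    def tone(freq, amp, start=0):
        return [amp * math.sin(2 * math.pi * freq * (start + t) / sr) for t in range(n)]

    # Profile learned from a noise-only stretch: a steady 100 Hz hum.
    profile = noise_profile([tone(100, 0.5), tone(100, 0.5, start=n)])
    # Later frame: same hum + a 300 Hz "voice" + a 2000 Hz "chirp" absent from the profile.
    mixed = [h + v + c for h, v, c in zip(tone(100, 0.5), tone(300, 0.4), tone(2000, 0.6))]
    cleaned = spectral_subtract(mixed, profile)
    print([round(cleaned[k], 3) for k in (5, 15, 100)])  # prints [0.0, 80.0, 120.0]
```

The steady hum matches the profile and drops out, but the chirp was never in the noise-only stretch, so it passes through untouched. Putting a chirp into the profile would not help much either: every frame would lose energy at that one frequency whether a bird was singing or not, while real birdsong glides across frequencies. Over-subtracting to compensate is a common source of warbly "musical noise" artifacts.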

Thanks,

Marcus