As DVDDoug said above, you have to hit two specifications, not just one. ACX Check is our best guess at ACX’s Automated Robot. Peak, Loudness and Noise are straightforward. ACX Check is a condensed collection of already existing Audacity tools that were long, complicated and boring to use.
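If it helps to see what those three numbers mean, here is a rough sketch in Python. This is not how ACX Check or the ACX robot works internally; it only shows the arithmetic behind Peak, Loudness (RMS) and Noise. The function names are made up for illustration, and the limits are ACX's published targets as I understand them (peak no higher than -3dB, RMS between -23dB and -18dB, noise no higher than -60dB), so check them against ACX's current requirements.

```python
import math

# Assumed limits: ACX's published targets as I understand them.
PEAK_MAX_DB = -3.0
RMS_MIN_DB = -23.0
RMS_MAX_DB = -18.0
NOISE_MAX_DB = -60.0


def to_db(value):
    """Convert a linear amplitude (1.0 = full scale) to dB. Silence is -inf."""
    return 20.0 * math.log10(value) if value > 0 else float("-inf")


def peak_db(samples):
    """Loudest single sample, in dB."""
    return to_db(max(abs(s) for s in samples))


def rms_db(samples):
    """Root-mean-square level of the samples, in dB."""
    mean_square = sum(s * s for s in samples) / len(samples)
    return to_db(math.sqrt(mean_square))


def rough_check(voice, room_tone):
    """Hypothetical helper: voice is the whole chapter, room_tone is a
    stretch of 'silence' from the same recording. Both are lists of
    floats in the range -1.0 to 1.0."""
    results = {
        "peak": peak_db(voice),
        "rms": rms_db(voice),
        "noise": rms_db(room_tone),
    }
    results["pass"] = (
        results["peak"] <= PEAK_MAX_DB
        and RMS_MIN_DB <= results["rms"] <= RMS_MAX_DB
        and results["noise"] <= NOISE_MAX_DB
    )
    return results
```

Passing all three only gets you past the machine. It says nothing about how the reading sounds.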

If you make it that far, it goes on to Human Quality Control for a theater check. That’s where you fall over if people hear you and run with hands over their ears. All the theatrical errors live here. Tongue ticks, stuttering, lip smacking, p-popping, slurring, etc. Those are rough for machines to test, but humans are a natural.

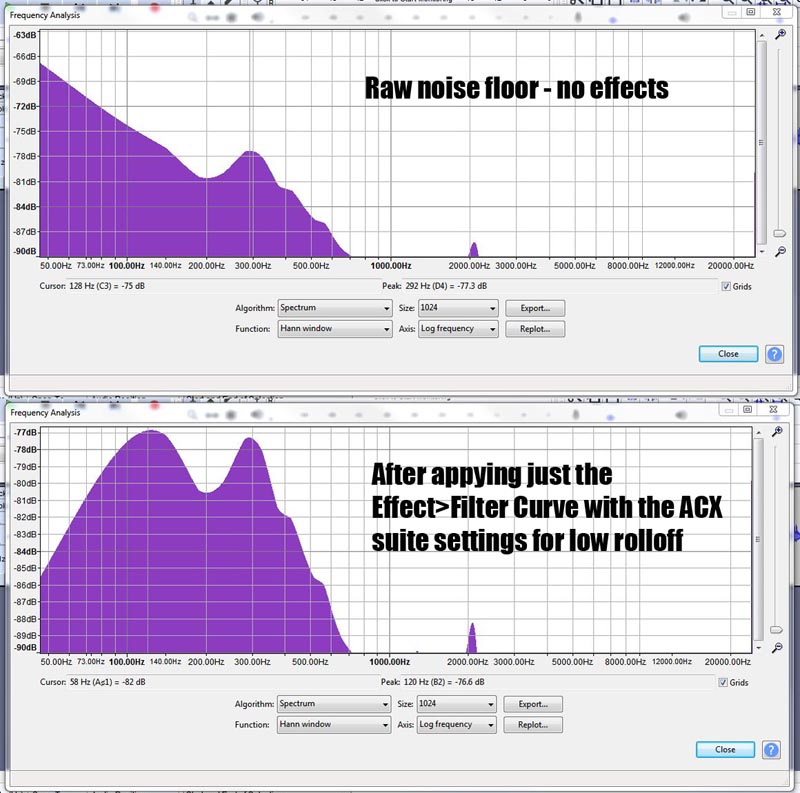

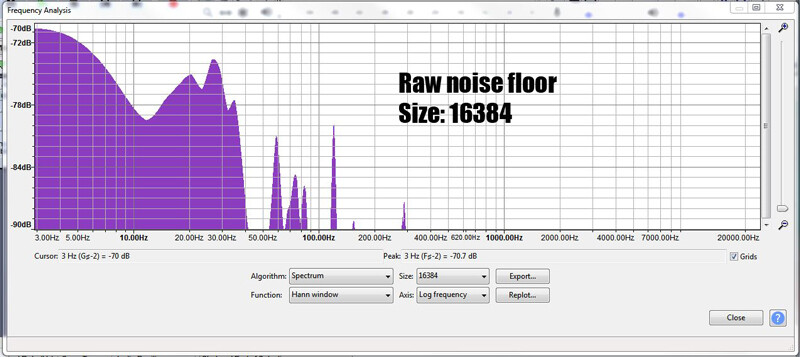

A word on the Mastering Suite. With the sole exception of Low Rolloff, if the tools aren’t needed, they don’t do anything. If you’re already loud enough, RMS Normalize has little or no effect. If your peaks are already quieter than -3dB, nothing happens. Low Rolloff is the exception: run the suite a second time and it applies all over again, and you could get odd low-pitched tones in your show.
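A toy illustration of that difference, in Python. These are stand-ins I made up, not Audacity's actual effects: `rms_normalize` is a plain gain change to an assumed -20dB target, `limit` is a hard clip at -3dB standing in for a real limiter, and `low_rolloff` is a simple one-pole high-pass filter with an arbitrary `alpha`. The point is only that the first two leave already-processed audio alone, while a filter changes the sound on every pass.

```python
import math

TARGET_RMS_DB = -20.0  # assumed target for illustration, not from Audacity
CEILING_DB = -3.0      # the peak figure mentioned above


def rms(samples):
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def rms_normalize(samples, target_db=TARGET_RMS_DB):
    """Scale the whole clip so its RMS lands on the target."""
    current = rms(samples)
    if current == 0:
        return list(samples)
    gain = (10 ** (target_db / 20)) / current
    return [s * gain for s in samples]


def limit(samples, ceiling_db=CEILING_DB):
    """Hard clip at the ceiling (a crude stand-in for a real limiter)."""
    ceiling = 10 ** (ceiling_db / 20)
    return [max(-ceiling, min(ceiling, s)) for s in samples]


def low_rolloff(samples, alpha=0.95):
    """One-pole high-pass: y[n] = alpha * (y[n-1] + x[n] - x[n-1])."""
    out = []
    prev_x = prev_y = 0.0
    for x in samples:
        y = alpha * (prev_y + x - prev_x)
        out.append(y)
        prev_x, prev_y = x, y
    return out


def max_change(a, b):
    """Largest sample-by-sample difference between two clips."""
    return max(abs(x - y) for x, y in zip(a, b))


# A low-pitched test tone, 0.3 of full scale.
tone = [0.3 * math.sin(2 * math.pi * k / 100) for k in range(1000)]

once = rms_normalize(tone)
normalize_again = max_change(once, rms_normalize(once))    # essentially zero

clipped = limit(tone)
limit_again = max_change(clipped, limit(clipped))          # exactly zero

filtered = low_rolloff(tone)
rolloff_again = max_change(filtered, low_rolloff(filtered))  # clearly not zero
```

The second pass of the filter cuts the low end again, which is why Low Rolloff is the one step you don't want to repeat.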

“…lush green mid-Hudson valley.” I think I remember this. I accused you of sounding like somebody had a gun and was forcing you to read. You can read any way you want, but if you don’t hit enough of the norms, you’ll put people to sleep.

The goal is to get people to pay money to sit in hard chairs and listen to you perform in real life. Take out the ACX step in the middle. Don’t hide behind the technology.

I still think it’s a good idea to read to kids in the library on Saturday. There’s just nothing like a direct, unfiltered connection to your audience to polish your technique. Or go listen to one and compare your work.

As you change your studio around, it’s also good to know that once you start a book, you shouldn’t change anything. There is no getting a new microphone in the middle of a book. We had two posters who moved houses in the middle of long books. It was not pretty.

Are you close enough to post a short test to ACX?

Koz