excerpt from therecordingrevolution.com

Some Tips For Mixing Acoustic Guitar, September 9, 2013 | Mixing, Plugins, Tips

Be Careful With Stereo Acoustics

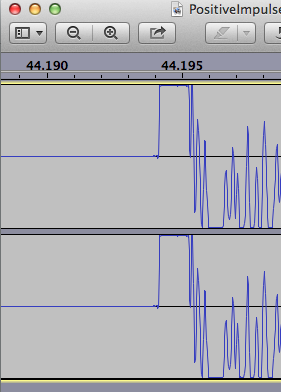

One final word of warning. If you are dealing with stereo recorded acoustic guitar tracks, spend some time making sure they are actually in phase. Collapse your mix to mono and see if the tone and fullness of the guitars goes away. If so, you might need to zoom into the waveforms and do some aligning.

One of the saddest things that can happen is that you have a nice sounding (seemingly) stereo acoustic guitar track in your mix when listening in stereo, but in mono (i.e. just about everywhere else except for in headphones) they become thin and harsh. This is why I usually avoid stereo guitars in the first place. But if you have them, make sure they are phase coherent in mono, not just in stereo.
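The mono check described in the excerpt can also be approximated with numbers instead of ears. The following is only a rough sketch, assuming the two channels are already loaded as equal-length NumPy arrays; the function name `mono_compatibility` and its return values are made up for illustration and do not come from the article.

```python
import math

import numpy as np


def mono_compatibility(left, right):
    """Return (correlation, level_change_db) for a stereo pair.

    correlation: +1 means the channels move together, -1 means one is
                 the inverse of the other, near 0 means unrelated.
    level_change_db: level of the mono sum relative to the average
                 level of the two channels (0 dB = nothing lost).
    """
    left = np.asarray(left, dtype=float)
    right = np.asarray(right, dtype=float)
    if left.shape != right.shape:
        raise ValueError("channels must have the same length")

    energy_l = np.sum(left ** 2)
    energy_r = np.sum(right ** 2)
    if energy_l == 0 or energy_r == 0:
        raise ValueError("a silent channel cannot be compared")

    # Normalised correlation at zero lag.
    correlation = float(np.sum(left * right) / np.sqrt(energy_l * energy_r))

    # Collapse to mono the same way a mono button would: average L and R.
    mono = 0.5 * (left + right)
    mono_rms = float(np.sqrt(np.mean(mono ** 2)))
    stereo_rms = float(np.sqrt(0.5 * (energy_l + energy_r) / left.size))

    if mono_rms == 0.0:
        return correlation, float("-inf")  # total cancellation
    return correlation, 20.0 * math.log10(mono_rms / stereo_rms)
```

A correlation close to +1 with a level change close to 0 dB suggests the pair should survive the collapse to mono; a strongly negative correlation or a large drop in level suggests the thinning the excerpt warns about. It is a single broadband figure, so it will not show which frequencies are being cancelled.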


Short of following these recommendations (from the quote above), can one simply zoom in and check that the ‘waves’ are aligned between left and right, i.e. that there is no offset? Is this what in-phase/out-of-phase refers to?

My Tascam DR05 portable recorder has two built-in microphones in fixed positions, and I don’t think there is any delay/latency between them; nothing I can notice visually, anyway. Of course they come in at different volumes/RMS, which is easily balanced. But is this “phase” issue simply the timing being off between channels (or between tracks of a song)? Is it a recording problem, depending on the equipment/setup, caused by different latency values? I assume that would not be a problem anyway (up to a point), since I would think it just creates an echo.
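For what it is worth, the offset does not have to be judged by eye. Below is a minimal sketch, assuming both channels are equal-length NumPy arrays, that slides one channel against the other and reports the shift where they match best. The names `estimate_lag` and `first_mono_notch_hz` are hypothetical helpers, not part of any recorder or DAW.

```python
import numpy as np


def estimate_lag(left, right, max_lag):
    """Estimate how many samples `right` trails `left`.

    Positive result: right arrives later than left.
    Negative result: right arrives earlier than left.
    Only shifts within +/- max_lag samples are tried.
    """
    left = np.asarray(left, dtype=float)
    right = np.asarray(right, dtype=float)
    if left.shape != right.shape:
        raise ValueError("channels must have the same length")
    if not 0 <= max_lag < left.size:
        raise ValueError("max_lag must be smaller than the signal length")

    best_lag, best_score = 0, -np.inf
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            a, b = left[: left.size - lag], right[lag:]
        else:
            a, b = left[-lag:], right[: right.size + lag]
        score = float(np.dot(a, b))  # cross-correlation at this shift
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def first_mono_notch_hz(lag_samples, sample_rate):
    """Lowest frequency that cancels when a signal is summed with a
    copy of itself delayed by lag_samples (half a period = the delay)."""
    if lag_samples == 0:
        raise ValueError("no delay, so no notch")
    return sample_rate / (2.0 * abs(lag_samples))
```

The second helper is there because a very short offset does not behave like an echo: summing a signal with a slightly delayed copy of itself cancels the frequency whose half-period equals the delay (and odd multiples of it). For example, an offset of 7 samples at 44100 Hz is about 0.16 ms, far too short to hear as a repeat, but it puts the first cancellation at 3150 Hz when the channels are summed to mono.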

Also, I’m not sure what the excerpt means when it suggests that stereo is heard only in headphones.