First of all, I want to say “thank you” to the people who develop this fantastic program.

I am a student working on a university project related to digital signal processing. I have an idea that I want to implement with Audacity, but I'm not sure whether it is possible or whether my programming skills are up to it. I want to ask you some questions about Audacity, share my idea, and maybe ask for some help later.

So, I want to write a program that analyzes a simple music signal, finds the frequencies in it, suggests the notes, and builds a MusicXML file. I am more or less familiar with programming (C, C++, C#, Java, Python), depending on the language, and I have already compiled the source code (in fact, it’s the biggest solution I’ve ever seen).

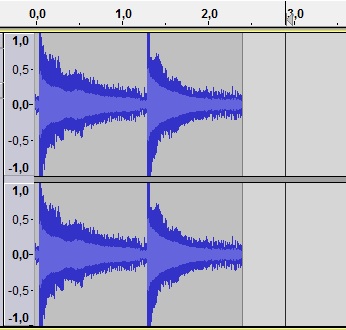

I guess Audacity already has tools that can analyze the frequencies in a signal. But can they be used to identify a note? If I record one note played on my guitar, will I be able to see its frequency? If not, is it possible (I think it is) to write some code that determines the “main” frequency of a note in a recording, like a tuner does, but offline rather than in real time?
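
To make the “offline tuner” idea concrete, here is a minimal sketch in Python that estimates the main frequency of a single recorded note by autocorrelation. It is only an illustration under assumptions: the function names, the 70 to 1000 Hz search range, and the 0.8 threshold are hypothetical choices, not anything taken from Audacity, and a real guitar recording would probably need windowing and better handling of harmonics.

```python
import math

def autocorr(x, lag):
    """Average product of the signal with a copy of itself shifted by `lag`."""
    n = len(x) - lag
    return sum(x[i] * x[i + lag] for i in range(n)) / n

def estimate_fundamental(samples, sample_rate, fmin=70.0, fmax=1000.0, threshold=0.8):
    """Return an estimate of the fundamental frequency in Hz, or None.

    fmin, fmax and threshold are assumed values chosen for illustration.
    """
    mean = sum(samples) / len(samples)
    x = [s - mean for s in samples]              # remove any DC offset
    energy = autocorr(x, 0)
    if energy == 0.0:
        return None                              # silence: nothing to measure
    min_lag = max(1, int(sample_rate / fmax))    # shortest period searched
    max_lag = min(int(sample_rate / fmin), len(x) // 2)  # longest period searched
    if max_lag - min_lag < 2:
        return None                              # recording too short
    r = {lag: autocorr(x, lag) / energy for lag in range(min_lag, max_lag + 1)}
    peak = max(r.values())
    # Take the FIRST strong local maximum, so that a multiple of the true
    # period (an octave error) is not picked by accident.
    for lag in range(min_lag + 1, max_lag):
        a, b, c = r[lag - 1], r[lag], r[lag + 1]
        if b >= threshold * peak and b > a and b >= c:
            # Parabolic interpolation gives a sub-sample estimate of the period.
            offset = 0.5 * (a - c) / (a - 2.0 * b + c)
            return sample_rate / (lag + offset)
    return None

if __name__ == "__main__":
    sr = 8000
    tone = [math.sin(2 * math.pi * 440 * i / sr) for i in range(2048)]
    print(estimate_fundamental(tone, sr))        # should be close to 440 Hz
```

The sketch works on a plain list of samples, so the recording would first have to be exported (for example as WAV) and read into memory; how that is best done from inside Audacity is exactly what I would like to find out.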

The next step will be to produce information like this. It is the MusicXML (MXML) format, which is used to represent sheet music created in a graphical view. I want to do the reverse: from music to MXML, and then to graphics.

    <score-partwise>
      <part-list>
        <score-part id="P1">
          <part-name>Music</part-name>
        </score-part>
      </part-list>
      <part id="P1">
        <measure number="1">
          <attributes>
            <divisions>1</divisions>
            <key>
              <fifths>0</fifths>
            </key>
            <time>
              <beats>4</beats>
              <beat-type>4</beat-type>
            </time>
            <clef>
              <sign>G</sign>
              <line>2</line>
            </clef>
          </attributes>
          <note>
            <pitch>
              <step>C</step>
              <octave>4</octave>
            </pitch>
            <duration>4</duration>
            <type>whole</type>
          </note>
        </measure>
      </part>
    </score-partwise>
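
Going from a detected frequency to a `<note>` element like the one above could look roughly like the following Python sketch. It assumes equal temperament with A4 = 440 Hz and always spells accidentals as sharps; `frequency_to_pitch` and `note_element` are hypothetical helper names, and only the standard-library `xml.etree.ElementTree` module is used.

```python
import math
import xml.etree.ElementTree as ET

# (step, alter) for each of the 12 semitones, spelled with sharps (an assumption).
STEPS = [("C", 0), ("C", 1), ("D", 0), ("D", 1), ("E", 0), ("F", 0),
         ("F", 1), ("G", 0), ("G", 1), ("A", 0), ("A", 1), ("B", 0)]

def frequency_to_pitch(freq_hz, a4_hz=440.0):
    """Map a frequency to the nearest (step, alter, octave), assuming A4 = a4_hz."""
    midi = round(69 + 12 * math.log2(freq_hz / a4_hz))  # 69 is the MIDI number of A4
    step, alter = STEPS[midi % 12]
    octave = midi // 12 - 1                              # MIDI 60 is C4
    return step, alter, octave

def note_element(freq_hz, duration, note_type):
    """Build a MusicXML <note> element for one detected frequency."""
    step, alter, octave = frequency_to_pitch(freq_hz)
    note = ET.Element("note")
    pitch = ET.SubElement(note, "pitch")
    ET.SubElement(pitch, "step").text = step
    if alter:
        # <alter> sits between <step> and <octave> and is omitted for naturals.
        ET.SubElement(pitch, "alter").text = str(alter)
    ET.SubElement(pitch, "octave").text = str(octave)
    ET.SubElement(note, "duration").text = str(duration)
    ET.SubElement(note, "type").text = note_type
    return note

if __name__ == "__main__":
    # 261.63 Hz is approximately middle C (C4).
    print(ET.tostring(note_element(261.63, 4, "whole"), encoding="unicode"))
```

Rounding to the nearest semitone means a slightly out-of-tune string still maps to the intended note, which seems acceptable for a first version.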

The tempo and rhythm will be set at the beginning of the recording or after it, because they matter only for producing the MXML, not for the sound analysis. So the user only has to set the tempo they want to play at, and maybe some other options.
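
As a rough illustration of how the user-supplied tempo could turn a measured note length into MusicXML values, here is a small Python sketch. It assumes the beat is a quarter note and that `<divisions>` is set to 4 (four divisions per quarter note, so a 16th note is one division); the table and the function name `quantize_duration` are hypothetical, and dotted notes, rests, and triplets are ignored.

```python
# Note lengths in quarter-note beats mapped to MusicXML <type> values.
NOTE_TYPES = {4.0: "whole", 2.0: "half", 1.0: "quarter", 0.5: "eighth", 0.25: "16th"}

def quantize_duration(seconds, tempo_bpm, divisions=4):
    """Snap a measured length to the nearest simple note value.

    Returns (duration, type), where duration is expressed in MusicXML
    divisions, assuming `divisions` divisions per quarter note.
    """
    beats = seconds * tempo_bpm / 60.0                       # length in quarter notes
    nearest = min(NOTE_TYPES, key=lambda b: abs(b - beats))  # closest known value
    return int(nearest * divisions), NOTE_TYPES[nearest]

if __name__ == "__main__":
    # At 120 beats per minute, a note held for 2 seconds lasts 4 beats.
    print(quantize_duration(2.0, 120))
```

With these assumptions the score's `<divisions>` value would have to be 4 rather than 1, otherwise eighth and 16th notes could not be written as whole-number durations.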

I don’t know yet how I will implement it: as a plug-in, or as a completely separate program without the Audacity functions I don’t need. And I don’t expect to do it perfectly; if it handles a couple of notes or a scale, that will be enough for me. It will already be progress for my skills.

That’s how I see it. Please tell me whether this is feasible and how hard it will be to code. If it is, I will ask you about the internal structure of the Audacity source code and about wxWidgets (I have mainly written console programs and have used GUIs only in C# (XAML, Windows Forms), so it is new to me).