Also could you help me sound like this please.

Probably not. He has a different natural voice pitch than you do. Most of the quality is the same between the two performances. I think you should go with these simple corrections for a while and get used to producing the video.

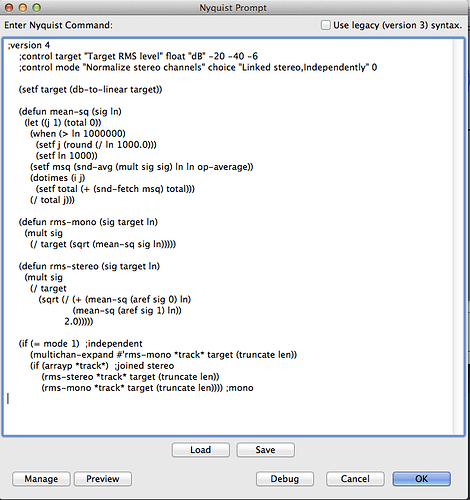

I applied a custom loudness tool from Steve called SetRMS. It’s a Nyquist program. It’s a paragraph of almost English words which you can copy and paste into Effect > Nyquist Prompt. It automatically sets the overall volume of the show (or whatever you select) and doesn’t care if some of the blue waves get too high. Scroll down to where it says “You can get SetRMS from me.”
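If it helps to see the idea, here is a rough Python sketch of what an RMS-setting tool does in general. This is not Steve's SetRMS code (that one is written in Nyquist). The function names and the -20 dB target are made up for illustration, and the samples are assumed to be plain floats between -1.0 and 1.0.

```python
import math

def rms_db(samples):
    """RMS level of the samples in dB relative to full scale (1.0)."""
    mean_square = sum(s * s for s in samples) / len(samples)
    if mean_square == 0.0:
        raise ValueError("selection is silent, RMS is undefined in dB")
    return 10.0 * math.log10(mean_square)

def set_rms(samples, target_db=-20.0):
    """Apply one gain to the whole selection so its RMS lands on target_db.

    target_db is a hypothetical default, not a value taken from SetRMS.
    Peaks are not checked, so some samples may end up beyond +/-1.0.
    """
    gain = 10.0 ** ((target_db - rms_db(samples)) / 20.0)
    return [s * gain for s in samples]

# Mostly quiet material with one loud spike.
quiet_with_spike = [0.01] * 999 + [0.5]
louder = set_rms(quiet_with_spike, target_db=-20.0)
print(round(rms_db(louder), 3))   # overall level now sits on the target
print(max(louder) > 1.0)          # the spike overshoots full scale
```

The point of the sketch is that one gain value is applied to everything, so the overall loudness lands where you want it but tall peaks can overshoot. That is why a limiter pass comes next.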

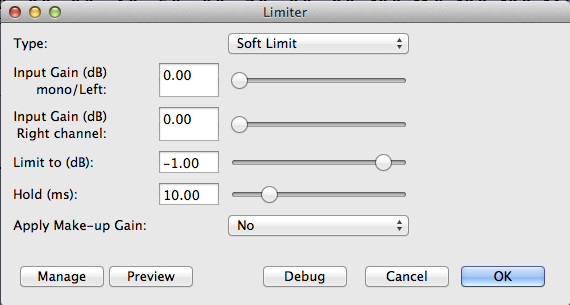

Then I ran Effect > Limiter with these settings.

Try those two on a longer performance. Try around 20 minutes or so. SetRMS was designed for audiobooks, but it should work just fine for you. If your voice changes volume too much, the finished segment may sound a little funny, but if you manage to stay even, it should sound OK.

I’m composing some of this as we go, so post back if you can’t follow a step.

Koz